Selecting the wrong containment equipment supplier on an EPC project rarely shows up as a problem at bid stage. It shows up during document review, FAT sign-off, or late-stage OQ, when the validation team starts asking questions the vendor cannot answer without several rounds of clarification. The practical consequence is not just schedule pressure: the EPC team absorbs the cost of compensating for documentation gaps through supplemental testing, reissued drawings, and delayed qualification closure, and those costs can easily exceed any initial price saving on the equipment itself. The friction point is structural: procurement compares line items while engineering is quietly assessing whether the vendor can survive a full documentation audit, and those two filters rarely produce the same shortlist. This article helps you identify which vendor capabilities and behaviors are genuinely predictive of validation success, and which signals in a bid package suggest that qualification risk is about to be transferred to your team.

Capabilities that separate a qualified BIBO vendor from a fabrication-only supplier

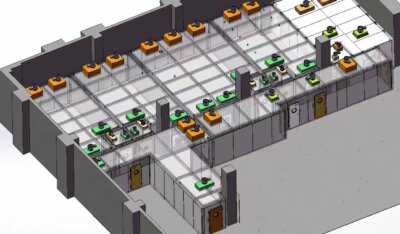

The most important distinction between a qualified BIBO vendor and a fabricator is not product quality in isolation — it is whether the vendor understands how their equipment fits into a validated containment system and can support that system through the qualification lifecycle. A fabricator can produce housing that meets dimensional drawings. A qualified vendor can also account for pressure differentials, airflow interaction with adjacent ventilation zones, structural loading at bag-change events, and the interface requirements that downstream commissioning engineers will need to resolve. That depth of system-level thinking is what prevents integration fragmentation during construction.

R&D heritage matters here in a specific, practical way. Vendors with an engineering lineage in containment technology have already encountered and resolved the failure modes that surface in biopharmaceutical environments — bag-out event pressure transients, HEPA seating variability under cyclic loading, door interlock logic under filter change conditions. A vendor without that history may produce compliant hardware while still missing the performance nuances that only become visible after installation. This is difficult to detect from a product catalog but tends to surface quickly when you ask how the vendor’s system has been verified under conditions resembling your process.

The decision implication is this: before bid review, confirm whether the vendor offers an integrated system design or component-level supply. If the answer is the latter, your engineering team is effectively taking on system integration responsibility — a workload that rarely appears in project scoping but consistently affects commissioning duration. Treating this as a commercial preference rather than a technical qualification criterion is one of the more common early errors on EPC projects that include complex containment requirements.

Drawing quality and requirement traceability during bid review

Drawings that look adequate at bid stage can still fail documentation review once the project enters DQ and IQ phases. The specific failure mode is not missing dimensions or incorrect materials; it is the absence of traceability between the vendor’s design assumptions and the project’s user requirements specification (URS). When a reviewer cannot follow a line from a URS requirement through a design basis to a specific drawing detail, that gap becomes a clarification cycle. On projects with compressed schedules, each additional clarification round cuts directly into the time available for IQ preparation.
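The traceability check described above can be made mechanical once the vendor's references are tabulated. The following is a minimal sketch, not a prescribed tool: the `Requirement` structure, the `find_trace_gaps` helper, and every identifier such as `URS-001` or `DWG-100` are hypothetical and exist only to illustrate the idea of flagging requirements whose chain to a drawing detail is broken.

```python
from dataclasses import dataclass, field


@dataclass
class Requirement:
    """One URS line item and the references that trace to it (all IDs hypothetical)."""
    urs_id: str
    design_basis_refs: list[str] = field(default_factory=list)
    drawing_refs: list[str] = field(default_factory=list)


def find_trace_gaps(requirements: list[Requirement]) -> dict[str, str]:
    """Return {urs_id: reason} for every requirement whose trace chain is broken."""
    gaps: dict[str, str] = {}
    for req in requirements:
        if not req.design_basis_refs:
            gaps[req.urs_id] = "no design basis reference"
        elif not req.drawing_refs:
            gaps[req.urs_id] = "no drawing detail reference"
    return gaps


# Illustrative data only; a real matrix would come from the bid package.
requirements = [
    Requirement("URS-001", ["DB-3.1"], ["DWG-100 detail A"]),
    Requirement("URS-002", ["DB-3.2"], []),
    Requirement("URS-003", [], ["DWG-101 detail C"]),
]

for urs_id, reason in find_trace_gaps(requirements).items():
    print(f"{urs_id}: {reason}")
```

Each flagged item is a likely clarification cycle, so the count of gaps gives a rough early indication of how much review effort a drawing package will demand.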

Component compatibility is a related and underestimated risk. When a vendor’s drawing package references components from multiple sourcing streams without explicit compatibility confirmation — instrumentation, seals, filter housings, access frames — the burden of resolving interface conflicts typically falls on the EPC team during construction. In sterile manufacturing contexts, EU GMP Annex 1 places significant weight on documented design control and traceability, not because it prescribes drawing formats, but because it treats the integrity of the design record as foundational to demonstrating that contamination risk has been systematically addressed. A vendor whose documentation practice is inconsistent with that expectation creates audit exposure that cannot be closed by substituting test data.

The practical check during bid review is straightforward: request a sample drawing package from a recently completed comparable project and ask explicitly how design changes are controlled and reflected in the as-built record. Vendors who respond with a defined change management process and redline procedure are structurally different from those who treat the drawing as a static deliverable. That difference determines whether your qualification team will be reviewing evidence or reconstructing it.

You can find a more detailed breakdown of documentation criteria in the BIBO Manufacturer Evaluation guide, which covers quality and traceability assessment in comparable project contexts.

FAT evidence and calibration support that validation teams need

A factory acceptance test is only as useful as its documentation. If a vendor’s FAT package consists of a signed checklist without defined acceptance criteria, instrument calibration records, or test protocols that map back to functional requirements, the validation team will need to develop a compensating strategy before OQ — either by conducting supplemental testing on-site or by negotiating acceptance criteria retroactively, both of which carry schedule and cost consequences.

ASTM E2500-22 provides a useful reference framework here: it structures verification activity around risk-based approaches that tie test scope to the criticality of system function, and it expects that acceptance criteria be established before testing begins rather than inferred from results. Vendors who align their FAT structure to that logic — even if they do not explicitly invoke E2500 — tend to produce packages that validation teams can work with directly. Vendors who treat FAT as a shipping condition rather than a qualification event tend to produce packages that document what was tested but cannot demonstrate that what was tested was sufficient.
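One way to screen a sample FAT package against that logic is to check, test by test, whether each record maps to a functional requirement and whether its acceptance criteria were approved before execution. The sketch below assumes a hypothetical record layout (`FatTest` and its field names are illustrative, not taken from any standard or vendor template) and treats same-day approval as acceptable, which is itself an assumption a project may want to tighten.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class FatTest:
    """One FAT test record (field names are hypothetical)."""
    test_id: str
    requirement_ref: Optional[str]         # functional requirement the test verifies
    criteria_approved_on: Optional[date]   # when acceptance criteria were approved
    executed_on: date                      # when the test was run


def review_fat_test(test: FatTest) -> list[str]:
    """Return a list of findings; an empty list means no issue was detected."""
    findings: list[str] = []
    if test.requirement_ref is None:
        findings.append("not mapped to a functional requirement")
    if test.criteria_approved_on is None:
        findings.append("no defined acceptance criteria")
    elif test.criteria_approved_on > test.executed_on:
        # Criteria dated after execution suggest they were inferred from results.
        findings.append("acceptance criteria approved after execution")
    return findings


# Illustrative records only.
fat_package = [
    FatTest("FAT-01", "FR-12", date(2024, 3, 1), date(2024, 3, 15)),
    FatTest("FAT-02", "FR-14", date(2024, 3, 20), date(2024, 3, 15)),
    FatTest("FAT-03", None, None, date(2024, 3, 16)),
]

for test in fat_package:
    findings = review_fat_test(test)
    print(test.test_id, "OK" if not findings else "; ".join(findings))
```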

Calibration support compounds this issue. Pre-wired and pre-tested units delivered with traceable instrument calibration records reduce on-site qualification labor and lower the risk of field discrepancies. The trade-off becomes visible when comparing a higher-cost vendor who delivers a complete, calibration-current package against a lower-cost vendor who delivers hardware that requires re-calibration and instrument verification before IQ can proceed. The second scenario is not inherently disqualifying, but it needs to appear explicitly in the project schedule and cost model — not as an assumption that the EPC team will absorb it quietly.
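To make that trade-off explicit rather than assumed, the on-site re-calibration labor and any resulting schedule delay can be added to the quoted price before offers are compared. The sketch below is a deliberately simple illustration: the `VendorOffer` structure, the labor rate, the daily delay cost, and all prices are hypothetical figures chosen for the example, not benchmarks.

```python
from dataclasses import dataclass


@dataclass
class VendorOffer:
    """A bid with the qualification effort it pushes onto the EPC team (hypothetical)."""
    name: str
    equipment_price: int        # quoted price, in project currency
    recalibration_hours: int    # estimated on-site labor before IQ can start
    schedule_delay_days: int    # estimated delay to IQ start


def qualification_adjusted_cost(
    offer: VendorOffer, labor_rate_per_hour: int, delay_cost_per_day: int
) -> int:
    """Quoted price plus the re-calibration labor and delay the EPC team absorbs."""
    labor = offer.recalibration_hours * labor_rate_per_hour
    delay = offer.schedule_delay_days * delay_cost_per_day
    return offer.equipment_price + labor + delay


# Illustrative numbers only.
complete_package = VendorOffer("Vendor A", 120_000, 0, 0)
hardware_only = VendorOffer("Vendor B", 100_000, 40, 5)

for offer in (complete_package, hardware_only):
    total = qualification_adjusted_cost(offer, labor_rate_per_hour=150, delay_cost_per_day=4_000)
    print(f"{offer.name}: quoted {offer.equipment_price}, adjusted {total}")
```

With these assumed inputs the lower quoted price ends up higher once the absorbed work is counted; with different inputs the result can go the other way, which is exactly why the figures belong in the cost model.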

Post-installation support is a related concern. Vendors with local service infrastructure can respond to calibration drift, instrument replacement, or post-FAT warranty questions within timeframes that align with project milestones. Vendors without that infrastructure create a single point of delay when a calibration question arises during OQ and the response time is measured in weeks.

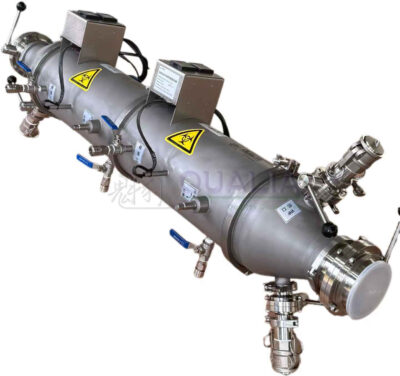

Weld quality, finish control, and bagging ergonomics to inspect

Weld quality in containment housings is not purely an aesthetic criterion — it affects cleanability, surface integrity under repeated decontamination cycles, and the long-term mechanical reliability of the housing under differential pressure conditions. The inspection criteria that experienced reviewers apply during physical audits include weld continuity and surface profile at internal corners, consistency of surface finish in product-contact and near-product zones, and the absence of crevices or mechanical dead zones that create cleaning and validation challenges.

Surface finish specification deserves more attention than it typically receives in procurement. Ra values matter, but so does the consistency of finish at welds, transitions, and access openings. A housing with a well-specified internal Ra that shows mechanical tooling marks or inconsistent passivation at weld joints creates two problems: a direct cleanability concern and a documentation problem, because the as-built finish record needs to reflect what was actually delivered. When it does not, validation teams face a choice between accepting a deviation or requiring rework at a project stage where neither option is convenient.

Bagging ergonomics is the criterion most commonly deprioritized at bid stage and most frequently regretted during commissioning and routine operation. The bag-in/bag-out changeout sequence requires that the operator maintain containment integrity through a series of physical manipulations in a confined workspace. Housing geometry, port positioning, bag attachment design, and visual access during the changeout all affect whether the procedure can be performed reliably by trained personnel under realistic working conditions. Vendors who have conducted human factors review or documented ergonomic testing of their changeout procedure are in a fundamentally different position from those who have not — and that difference becomes operationally significant the first time a changeout is performed under field conditions rather than in a demo environment.

The practical inspection approach is to request a live bag changeout demonstration during vendor qualification. The demonstration will reveal port accessibility, bag tensioning behavior, and whether the sequence can be performed without requiring unusual physical force or awkward positioning. If that demonstration is unavailable or deflected, treat it as a signal about how seriously the vendor has stress-tested their own procedure.

Project-support behaviors that reduce EPC coordination risk

Documentation responsiveness during active project execution is one of the more reliable predictors of how a vendor will perform during qualification. A vendor who routinely closes drawing comments within agreed timelines, issues NCR responses with root cause analysis rather than corrections only, and proactively flags scope changes before they affect the critical path has demonstrated a project-management behavior pattern that reduces EPC coordination load. A vendor who requires repeated follow-up, issues revision packages without change summaries, or treats deviation closure as a post-shipment activity creates a coordination overhead that accumulates across the project timeline.

Supply chain positioning is a practical planning criterion that belongs in vendor assessment even if it rarely appears in RFQ criteria. Vendors operating within established industrial clusters or with contracted supply chain relationships for critical components — filter housings, specialized seals, instrumentation — are better insulated from material availability disruptions than those with fragmented or single-source supply. On EPC projects with firm mechanical completion dates, a six-week lead time extension on a containment component can have disproportionate schedule consequences. This is not a compliance consideration; it is a logistics risk input that should be visible in vendor assessment.

Localized service infrastructure matters beyond installation. Time-zone-aligned technical support, regional spare parts availability, and accessible engineering contacts reduce mean time to resolution when field questions arise during commissioning or early operation. The distinction between a vendor who can connect you to a regional application engineer within a business day and one who routes every technical question through a central office several time zones away becomes concrete the first time a commissioning milestone depends on a quick answer. Experienced EPC teams factor this into vendor assessment even when the RFQ does not explicitly ask for it.

For projects that involve integrated facility design alongside containment equipment selection, understanding how BIBO systems are positioned within broader EPC scope helps clarify where vendor coordination risk concentrates — the EPC Capabilities overview provides useful context on how those interfaces are typically managed.

Vendor scorecard for technical, documentation, and qualification performance

A structured scorecard keeps vendor evaluation aligned across procurement, engineering, and validation functions — which is necessary because those functions weight criteria differently and will otherwise reach different conclusions from the same bid package. As noted in open-access research on biopharmaceutical facility validation, the complexity of qualification requirements in these environments justifies formal, structured vendor assessment rather than informal comparative judgment, because informal assessment tends to weight visible criteria like price and delivery while underweighting criteria that only surface during qualification.

A defensible scorecard for BIBO vendor assessment on EPC projects should cover at least five evaluation dimensions: technical heritage and R&D depth, documentation discipline and traceability practice, FAT structure and calibration support, supply chain verticality and regional logistics positioning, and post-award project-support behavior. Each dimension needs defined sub-criteria and a consistent scoring convention so that a vendor with a strong price position but weak documentation practice is evaluated against the same framework as a vendor with a premium price and a complete qualification package.

Two criteria that are frequently omitted from standard vendor scorecards deserve explicit inclusion. The first is modular scalability — whether the vendor’s system architecture can accommodate future process changes without requiring a complete equipment replacement — which affects long-term cost of ownership in ways that a unit-price comparison does not capture. The second is the vendor’s history of supporting qualification through OQ closure on comparable projects, including how they handled deviation documentation and acceptance criteria disputes. Both criteria require active inquiry rather than passive review of submitted materials, which means they only appear in the scorecard if someone on the evaluation team asks for them.

The scoring process should also include a threshold condition: if a vendor fails the documentation sub-criteria — incomplete FAT protocols, missing calibration records, absent material traceability — that failure should carry sufficient weight to offset a competitive price position. Treating documentation weakness as a correctable deficiency rather than a disqualifying signal is the decision pattern that leads to post-award rework cycles. The BIBO Supplier Selection Guide provides additional structure on how to organize this kind of vendor qualification process.
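The five dimensions and the documentation threshold can be combined in a small scoring routine. The sketch below is one possible interpretation, not a recommended weighting: the dimension keys, the percentage weights, the 0 to 5 scale, and the gate value of 3 are all assumptions made for illustration, and the threshold is modeled as a hard gate that is reported separately from the weighted score.

```python
# Hypothetical weights (percent) for the five evaluation dimensions.
WEIGHTS = {
    "technical_heritage": 20,
    "documentation": 30,
    "fat_calibration": 25,
    "supply_chain": 10,
    "project_support": 15,
}

# Assumed minimum documentation score (0-5 scale) to stay on the shortlist.
DOCUMENTATION_GATE = 3


def evaluate(scores: dict[str, int]) -> tuple[float, bool]:
    """Return (weighted score on the 0-5 scale, whether the documentation gate is passed)."""
    if scores.keys() != WEIGHTS.keys():
        raise ValueError("scores must cover exactly the scorecard dimensions")
    weighted = sum(WEIGHTS[d] * scores[d] for d in WEIGHTS) / sum(WEIGHTS.values())
    passes_gate = scores["documentation"] >= DOCUMENTATION_GATE
    return weighted, passes_gate


# Illustrative scores only.
vendor_a = {"technical_heritage": 4, "documentation": 4, "fat_calibration": 4,
            "supply_chain": 3, "project_support": 4}
vendor_b = {"technical_heritage": 5, "documentation": 2, "fat_calibration": 5,
            "supply_chain": 5, "project_support": 5}

for name, scores in (("Vendor A", vendor_a), ("Vendor B", vendor_b)):
    weighted, passes_gate = evaluate(scores)
    status = "eligible" if passes_gate else "fails documentation gate"
    print(f"{name}: {weighted:.2f} ({status})")
```

In this example the second vendor has the higher weighted score but still fails the gate, which is the behavior the threshold condition is meant to enforce: strength elsewhere does not compensate for weak documentation.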

The core judgment this evaluation process needs to produce is not which vendor is cheapest or which product looks most capable in a brochure — it is which vendor is most likely to support your team from DQ through OQ without creating compensating work that your project schedule and budget did not account for. That judgment requires looking past line-item pricing and assessing documentation discipline, FAT rigor, calibration support, and post-award responsiveness as the criteria that actually determine qualification velocity.

Before finalizing any BIBO vendor selection for an EPC project, confirm three things explicitly: whether the vendor’s FAT package includes defined acceptance criteria tied to functional requirements, whether their drawing and change management practice is compatible with your validation team’s IQ/OQ documentation expectations, and whether their regional service infrastructure can support commissioning and early operational questions within acceptable response windows. These are the criteria that experienced reviewers treat as stronger discriminators than price — and they are the ones most likely to be absent from a standard RFQ if no one on the team makes them explicit.

Frequently Asked Questions

Q: What should we do if our procurement team has already shortlisted vendors based on price before engineering has reviewed documentation quality?

A: Reopen the shortlist using a weighted scorecard before the RFQ response deadline is set, not after award. When procurement and engineering filter the same bid package on different criteria, the mismatch is structural, and aligning both functions on one scorecard resolves it before it becomes a post-award rework problem. If the shortlist is already fixed, request a sample FAT package and drawing change log from each vendor immediately; documentation weakness identified at this stage is still recoverable, whereas the same weakness identified during DQ is not.

Q: At what point does a vendor’s documentation weakness become a disqualifying condition rather than a correctable deficiency?

A: When FAT protocols lack defined acceptance criteria, calibration records are absent or out of traceability, or material traceability documentation cannot be produced on request, that combination should be treated as disqualifying rather than correctable. Each gap in isolation may be addressable with supplemental effort, but a vendor who arrives at bid stage with all three missing has demonstrated a documentation practice that will not improve under project schedule pressure. The cost of compensating for that pattern through reissued drawings, supplemental testing, and delayed IQ closure routinely exceeds the price difference that made the vendor appear competitive.

Q: How does advice about vendor documentation discipline change for a fast-track EPC project with a compressed qualification schedule?

A: On compressed schedules, documentation discipline becomes more critical, not less, because there is no slack to absorb clarification cycles. A vendor who requires three rounds of comment resolution on a drawing package or issues FAT results without acceptance criteria forces your validation team to develop compensating strategies (retroactive acceptance criteria, supplemental field testing, deviation documentation) at the precise stage when schedule pressure is highest. Fast-track projects make documentation-weak vendors riskier, not more acceptable, so the documentation standard a vendor must meet to stay on the shortlist should be raised accordingly.

Q: Is it worth paying a price premium for a vendor with stronger local service infrastructure if the project site is in a region where the lower-priced vendor has limited presence?

A: Yes, if the alternative is routing every commissioning and calibration question through a central office in a distant time zone. The premium is worth assessing against a concrete risk: if a calibration drift or instrument question arises during OQ and the response time is measured in weeks rather than days, the milestone delay cost will typically exceed the service infrastructure premium many times over. The practical check is to ask each vendor for documented response time commitments and regional contact details — not general statements about global support — and to verify those commitments against reference project feedback from a comparable geography.

Q: Should modular scalability be weighted heavily in the scorecard even if the current project scope is fixed and no expansion is planned?

A: It should receive moderate weight as a tiebreaker criterion rather than a primary discriminator, unless the facility is in a therapeutic area where process changes are likely within the equipment’s service life. The practical reason is that a system architecture that cannot accommodate future filter configurations, pressure class changes, or access modifications will require full equipment replacement rather than modification — a cost that does not appear in the initial unit price comparison but materially affects total cost of ownership. If two vendors score equally on documentation, FAT rigor, and project-support behavior, modular scalability is a reasonable basis for final selection.
